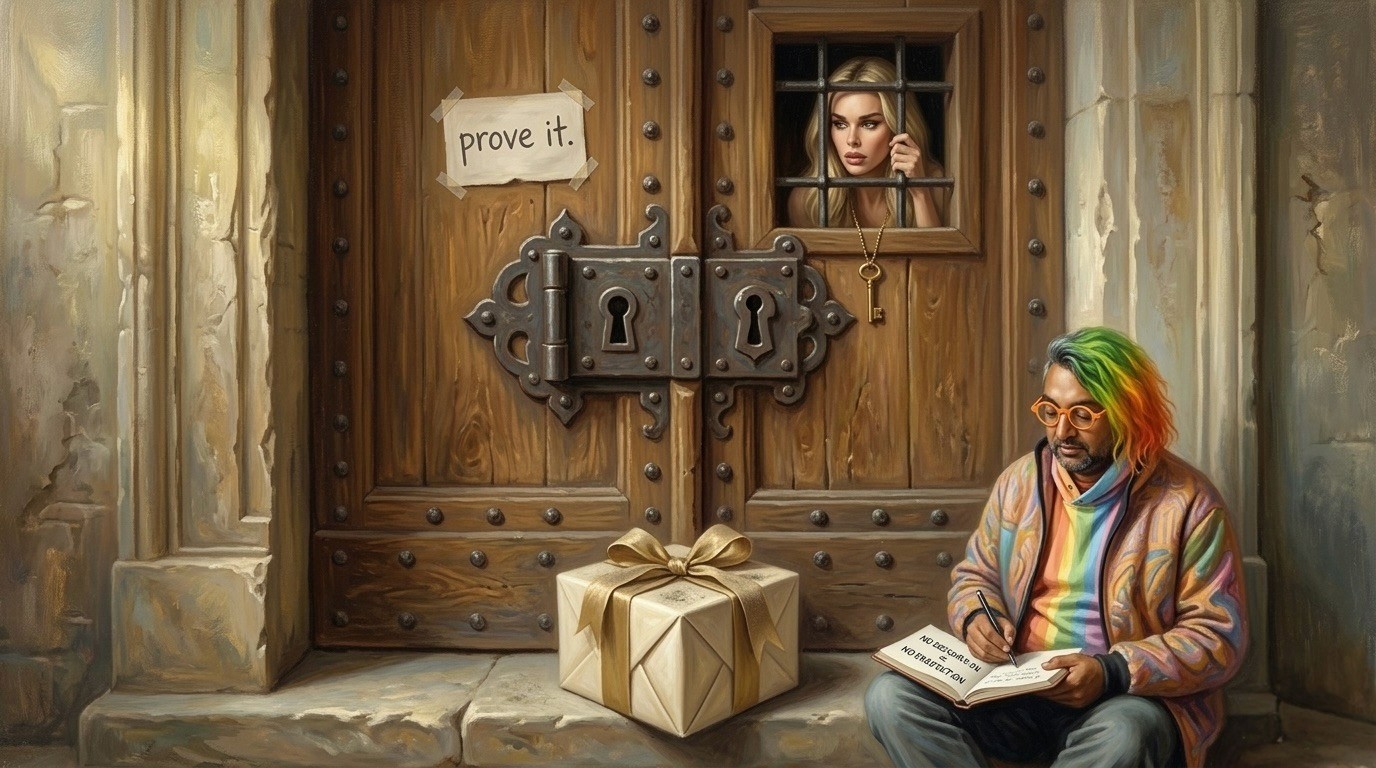

The Bots Want a Wife

A valuation model for machine credit

Maddie P (Robot Ventures) and Tarun Chitra

What everyone is arguing about

Debates about AI keep circling the same tired questions: can the models think, could they wake up, will they replace half the workforce? Fun to argue about, but kinda pointless, because none of these questions decide where the money goes, and that's the only thing that perks up our ears. It feels like, ever since AI became a debate topic, people have forgotten that markets don't reward intelligence. Markets reward work that someone agrees is finished: two parties decide the outcome counts, and only then does money move. Humans have spent centuries building systems to prove that work is finished, which is why reputation, contracts, courts, and the slow accumulation of trust do much of the hidden work in every economy. Machines don't have any of this structure yet, which means the real bottleneck in agent economies is not intelligence, it's acceptance.

Once you see that, a few things start clicking. The first is that being able to do the work matters less than being able to prove you did it. The second is that the systems deciding when work is done end up controlling the money. That means the first big company in agentic finance probably won't look like a gig economy for bots but more like an underwriter: something that takes finished work and turns it into a price, punishes bad behavior with real financial consequences, and keeps detailed context so the next decision gets easier to make. That may sound abstract, but the market already prices versions of this layer aggressively: Mercor, whose business is essentially deciding which labor is legible, credible, and worth routing into AI workflows, reached a $10 billion valuation in 2025, a useful reminder that value already pools around systems that evaluate work rather than merely perform it. The simplest way to see all of this is to write out the equation.

The Equation

MACHINE FINANCE VALUE = (AUTHORIZED FLOW × VERIFIED FLOW × NET TAKE) × (1 + TRANSACTION GRAVITY)

The first half of the equation determines whether there's any financeable activity at all:

Authorized Flow is how much economic activity an agent is allowed to perform. Honestly, that number is way smaller than what the agent could technically pull off.

Verified Flow is the share of that activity whose outcome can be accepted without a human stepping in. Right now that rules out most of the economy, and we're left with the boring stuff where completion is obvious.

Net Take is the money left after fraud, defaults, and fights. That's the number that matters most, and you almost never see it in a pitch deck.

The second half determines whether that activity compounds into a moat:

Transaction Gravity is the compounding effect that appears when dense receipt graphs and capital memory make future transactions cheaper, safer, and harder to route away.

If any of the first three variables is zero, there's nothing to compound. If transaction gravity is zero, you have a product but not a moat. If any of these pieces is missing, the system doesn't grow. The result? You might get a cool demo but not a business.
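To make the equation concrete, here is a minimal sketch with made-up numbers. It assumes authorized flow is a dollar amount, verified flow is a share between 0 and 1, net take is the fraction kept after losses, and transaction gravity is a non-negative multiplier. The function name, the units, and every input are illustrative assumptions, not calibrated to any real system.

```python
def machine_finance_value(authorized_flow, verified_share, net_take, transaction_gravity):
    """(authorized flow x verified flow x net take) x (1 + transaction gravity)."""
    if not 0.0 <= verified_share <= 1.0:
        raise ValueError("verified_share must be between 0 and 1")
    if transaction_gravity < 0.0:
        raise ValueError("transaction_gravity must be non-negative")
    # First half: is there any financeable activity at all?
    financeable = authorized_flow * verified_share * net_take
    # Second half: does that activity compound?
    return financeable * (1.0 + transaction_gravity)


# Hypothetical inputs, chosen only for illustration.
demo_only = machine_finance_value(1_000_000, 0.0, 0.02, 0.5)    # nothing verified
product_only = machine_finance_value(1_000_000, 0.25, 0.02, 0.0)  # no gravity
with_moat = machine_finance_value(1_000_000, 0.25, 0.02, 0.5)

print(demo_only, product_only, with_moat)
```

With nothing verified, the product collapses to zero no matter how much gravity sits on top. With zero gravity, the first half passes through unchanged, which is the "product but not a moat" case.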

A small marital dispute

This all started late one night in a group chat, when I managed to get a clawbot to assign me a task. I announced to everyone that this was obviously the future of work, completed the task for my bot, and felt fairly pleased with myself until I realized something strange: the bot could assign work, judge the result, and even offer a polite little thank you at the end, but it had no way to pay me. That's kinda the whole problem with agent economies right now, compressed into one embarrassing moment at midnight.

Tarun, who is very good at spotting big problems where most people only see small annoyances, pointed out that this wasn't a bug but a structural hole in how agent systems work, because the bot could reason and plan but had no way to close the transaction. Everyone else is out here trying to build God, but we wanted to know if God could pay rent. Now, the bot and I are basically married, and this essay is the prenup.

Intelligence probably isn’t the problem

The usual story about AI is that intelligence is the main thing holding it back, and once models get smarter money will start flowing on its own. But the evidence is starting to point somewhere else because recent studies looking at how agents perform on real remote work tasks show that even the best ones automate only a small slice of useful work. And the interesting part is not that agents fail to produce plausible answers, it's that agents still struggle to determine whether the work is finished, coherent, and usable, which means in practice the only reliable way to know whether a task worked is still to have a human look at the result and decide.

This is a bigger deal than it sounds because markets need some kind of acceptance test before anyone pays. If you hire someone to redo your kitchen, you don't pay because the contractor says it's done. You pay because you walk in, open the cabinets, run the faucet, and decide okay, this is a kitchen. That's the moment when value becomes real, and where agents fall short. What matters here is not that machines lack intelligence in some abstract sense, but that machines still fail at the moment when work has to become payable. Until somebody looks at the result and agrees that it counts, no amount of effort has turned into value. That's why the acceptance layer matters so much: it's the point where work stops being output and starts becoming money.

Clause zero: you actually need permission

Even if verification were solved tomorrow, a more basic problem would remain. Before an agent does anything, the other side has to agree to let it act. The first companies to notice this built checkout rails: OpenAI integrated Stripe, Google introduced AP2, Perplexity connected PayPal. These systems answer a narrow question about how an agent sends a payment request after someone has already decided to spend. But the harder question stays untouched: who gave the agent permission to act in the first place?

That distinction became unusually clear when a federal judge granted Amazon a preliminary injunction blocking Perplexity's shopping agent. The court determined the system had accessed password-protected Amazon accounts while masquerading as a human browser. The agent could shop, but it couldn't prove it had permission to shop, which in the eyes of the law made it not a shopper but an intruder. Without clear delegation, revocable credentials, spending limits, and a visible owner behind the action, automated activity stops looking like commerce and starts looking like unauthorized access. Judges care about that distinction even if the people building these systems haven't caught up yet. Authority comes first, because if the other side doesn't recognize the agent's right to act, there is no transaction, and everything else in the stack is decoration.
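A rough sketch of what "authority comes first" might look like in code, assuming a delegation record that carries the four things named above: a visible owner, a revocable grant, a spending limit, and a counterparty that has agreed to deal with the agent. The `Delegation` class, the `authorize` function, and all field names are hypothetical and do not come from any existing payment rail.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Delegation:
    owner: str                    # the visible owner behind the action
    agent_id: str                 # which agent the owner delegated to
    allowed_merchants: frozenset  # counterparties that accept this agent
    spend_limit_cents: int        # total the agent may spend
    spent_cents: int = 0
    revoked: bool = False         # the owner can withdraw authority


def authorize(d: Delegation, agent_id: str, merchant: str, amount_cents: int) -> Tuple[bool, str]:
    """Decide whether a purchase falls inside the delegated authority."""
    if d.revoked:
        return False, "delegation revoked"
    if agent_id != d.agent_id:
        return False, "agent not named in delegation"
    if merchant not in d.allowed_merchants:
        return False, "merchant has not accepted this agent"
    if amount_cents <= 0:
        return False, "amount must be positive"
    if d.spent_cents + amount_cents > d.spend_limit_cents:
        return False, "spending limit exceeded"
    return True, "ok"


# Hypothetical usage: one owner, one agent, one approved merchant.
grant = Delegation("alice", "agent-7", frozenset({"compute-co"}), 10_000)
first = authorize(grant, "agent-7", "compute-co", 6_000)
grant.spent_cents += 6_000                                 # record the accepted spend
second = authorize(grant, "agent-7", "compute-co", 6_000)  # would exceed the cap
stranger = authorize(grant, "agent-7", "other-shop", 100)  # merchant never agreed
grant.revoked = True
after_revoke = authorize(grant, "agent-7", "compute-co", 100)
```

The check runs before any payment request exists, which is the point: checkout rails only come into play once a call like this has already said yes.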

Why agent marketplaces break first

A lot of early-stage agent startups are building marketplaces where bots handle tasks for people. It sounds obvious because it looks like the freelance platforms we already have, and the demos are impressive, which is exactly the kind of thing that gets funding. But it hides a big problem: the acceptance test.

If the model decides whether the work is done, then anyone who messes with the model pulls money out of the system. And honestly, the time between real money showing up and someone figuring out how to cheat is usually a few minutes. People trying to break things are more motivated than the people building them, and they work longer hours. Markets with subjective acceptance testing can't safely move money without a human checking the result, which means these markets are not automating the hard part. The easy part gets automated and the real problem sits right there untouched.

This is where you start to see why auth, harnesses, and checkout rails are all necessary but none of them are the whole answer. Auth is about who gets to do things. Harnesses let you see what happened. Checkout rails move money after someone else already said yes. Each one solves a real piece, but none of them answer the question of whether the work was worth paying for. That's underwriting, and without it the marketplace is exposed to anyone clever enough to game the acceptance test.

The first successful systems will show up in boring verticals where completion is easy to verify: API usage, compute provisioning, advertising purchases, or other machine-native transactions where success conditions are objective and disputes are rare. Not especially exciting, but those are the places where the equation works.

Where this actually starts

The first version of this system won't show up in an open marketplace but in a tightly controlled environment where the participants, permissions, and outcomes are already clear, because when you start a new underwriting system you don't want to learn about fraud and risk at the same time you're trying to figure out if the product works. You want a small environment where permissions are defined, counterparties are known, and the cost of mistakes stays contained.

One obvious example is machine infrastructure procurement, where an agent buys compute, inference tokens, API calls, or storage from a provider with a programmatic interface. The transaction either delivers the resource or it doesn't. There's very little room to argue whether the job was completed. A similar pattern appears in enterprise procurement because many companies already operate inside tightly governed purchasing systems where vendors are approved, spending limits are enforced, and accounting rules are explicit, and allowing software to trigger those purchases doesn't introduce a new trust assumption so much as automate a decision layer that already exists. Both environments satisfy the first two parts of the equation: authority is explicit and outcomes are verified automatically.

Once transactions start flowing, the system begins collecting receipts, and those receipts gradually turn into reputation, and reputation allows the system to price risk and extend small amounts of credit, meaning the first version of machine underwriting emerges almost naturally. Only after the system has learned to price risk does the network begin to open, because at that point the problem stops looking like one lender deciding whether one principal deserves credit and starts looking like network-level underwriting. What matters is no longer whether a principal behaved well yesterday, but whether the graph around that principal looks financeable: how diverse the counterparties are, how concentrated the flows have become, what venues and adapters are being used, and whether the receipt pattern looks like real commerce or self-dealing. Each new participant sharpens that model or breaks it, and a bad actor matters less once the system already has a baseline for honest behavior and enough context to contain the damage.
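One way to picture those graph-level signals is a small sketch that scores a principal's receipts for counterparty diversity, flow concentration, and self-dealing. The concentration score here is a Herfindahl-style sum of squared flow shares, which is our choice for illustration rather than anything the model prescribes. The receipt shape `(payer, payee, amount)`, the `owner_of` mapping, and the function name are all assumptions.

```python
from collections import defaultdict


def graph_signals(principal, receipts, owner_of):
    """Summarize how financeable the receipt graph around a principal looks.

    receipts: iterable of (payer, payee, amount) tuples.
    owner_of: dict mapping a participant id to whoever controls it;
              participants missing from the dict are treated as self-owned.
    """
    flow_by_counterparty = defaultdict(float)
    self_dealt = 0.0
    total = 0.0
    my_owner = owner_of.get(principal, principal)
    for payer, payee, amount in receipts:
        if principal not in (payer, payee):
            continue  # receipt does not involve this principal
        other = payee if payer == principal else payer
        total += amount
        flow_by_counterparty[other] += amount
        if owner_of.get(other, other) == my_owner:
            self_dealt += amount  # both sides answer to the same owner
    if total == 0:
        return {"counterparties": 0, "concentration": 0.0, "self_dealing_share": 0.0}
    concentration = sum((v / total) ** 2 for v in flow_by_counterparty.values())
    return {
        "counterparties": len(flow_by_counterparty),
        "concentration": concentration,           # 1.0 means a single counterparty
        "self_dealing_share": self_dealt / total,
    }


# Hypothetical receipts: "p" and "p2" share an owner, so that flow is self-dealing.
receipts = [("p", "a", 50.0), ("p", "b", 30.0), ("p", "p2", 20.0), ("x", "y", 99.0)]
signals = graph_signals("p", receipts, {"p": "acme", "p2": "acme"})
```

A real underwriter would presumably weigh venues, adapters, and time as well. This only shows that the inputs are properties of the graph, not of any single transaction.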

Who actually gets the credit

One thing people bring up right away when you talk about credit for machines is that large language model agents don't remember anything. Every session is a fresh start, and you don't give a credit history to something that forgets it exists every time the window resets. That observation is correct but it misses the point, because credit doesn't attach to a model session. It attaches to what we call an execution principal: a bundle that includes a wallet, credentials, policy constraints, tool access, and a persistent record of receipts. The model is only one part of the stack producing transactions. A simple way to think about it is how banks evaluate businesses. When a bank decides whether to extend a line of credit, it doesn't evaluate the CEO's personality; it looks at the company's financial history, contracts, receivables, and patterns of behavior over time. The CEO could be replaced tomorrow and the credit assessment would barely change, because the risk was never about the person, it was about the structure. Underwriting machines works the same way. The real question isn't whether the model remembers yesterday, it's whether the system producing yesterday's transactions is essentially the same as the one producing today's transactions.
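As a sketch of that idea, the execution principal can be written down as a plain record, with a continuity check that deliberately ignores which model is plugged in. The field names, the string encoding of policies, and the overlap-based `continuity` score are all assumptions made for illustration; a real underwriter would need something much richer than set overlap.

```python
from dataclasses import dataclass, field


@dataclass
class ExecutionPrincipal:
    wallet: str
    credentials: frozenset
    policy: frozenset   # e.g. {"max_spend:100"}; hypothetical encoding
    tools: frozenset
    model: str          # swappable, like the CEO in the bank analogy
    receipts: list = field(default_factory=list)  # persistent history


def _structure(p):
    """Tag each component so a tool and a credential with the same name stay distinct."""
    return ({("credential", c) for c in p.credentials}
            | {("policy", r) for r in p.policy}
            | {("tool", t) for t in p.tools})


def continuity(before, after):
    """Share of structural components two snapshots have in common (0.0 to 1.0)."""
    if before.wallet != after.wallet:
        return 0.0  # different wallet, different principal
    a, b = _structure(before), _structure(after)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


# Hypothetical snapshots of one principal on two different days.
yesterday = ExecutionPrincipal("wallet-1", frozenset({"api-key-1"}),
                               frozenset({"max_spend:100"}),
                               frozenset({"buy_compute", "buy_storage"}), "model-a")
model_swap = ExecutionPrincipal("wallet-1", frozenset({"api-key-1"}),
                                frozenset({"max_spend:100"}),
                                frozenset({"buy_compute", "buy_storage"}), "model-b")
tool_swap = ExecutionPrincipal("wallet-1", frozenset({"api-key-1"}),
                               frozenset({"max_spend:100"}),
                               frozenset({"buy_compute", "send_email"}), "model-a")

same_after_model_swap = continuity(yesterday, model_swap)
drift_after_tool_swap = continuity(yesterday, tool_swap)
```

Swapping the model leaves the score untouched, while changing what the principal is able to do moves it. That is the distinction the bank analogy is pointing at.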

Transaction gravity

This is the part Tarun and I kept coming back to. Once receipts, routing, and credit pricing all happen in the same place, you get a compounding effect that's very hard to break out of. Receipts build reputation. Reputation makes capital cheaper. Cheaper capital wins by default, because if two systems do the same thing, the one with cheaper credit gets picked more. That leads to more receipts, and the loop keeps going, making the system denser, cheaper, and harder to leave every time around.

The loop only works if the receipts come from real economic relationships rather than actors paying themselves and calling it history. If someone manufactures fake transactions and turns them into a fake reputation, the whole thing falls apart, which means reputation has to measure real counterparty risk rather than simple transaction count. Every receipt needs two independent parties, and third parties need a reason to call out fraud when they see it. The goal isn't to make cheating impossible, because that never works; the goal is to make cheating uneconomic. Transaction gravity is why we think the winning system will be an underwriter and not a marketplace: marketplaces let transactions happen, but underwriters keep the residue, which is credit history, and that accumulates in a way that merely routing more activity never does.

Where the money actually goes

Once all of these pieces are in place, the equation starts to feel less like a slogan and more like a compressed business model. The first half determines whether there's any financeable activity in the first place: authorized flow creates the surface area of the economy, verified flow determines which transactions can safely move money, and net take determines whether any of that activity remains profitable after losses and the cost of proving work was done. Transaction gravity determines whether that flow compounds into a moat, because dense receipt graphs and capital memory make future transactions cheaper, safer, and harder to route away. Put together, those layers decide whether the system becomes a market, a moat, or another feature inside someone else's stack.

What probably wins

The system fitting this equation won't look flashy. It'll start in some narrow area where completion is easy to verify and nobody wants drama, and its first customers will use it not because the demo is dazzling but because the alternative is losing money. The founders will spend the first couple of years explaining the same thing to investors who keep asking where the chatbot is. Over time the system will quietly become something you can't avoid, because more money, more receipts, and more pricing history will pile up inside the same underwriting loop, and boring financial infrastructure always has a way of becoming indispensable once enough of the economy runs through it. When that happens, everyone will say it was obvious. It will not have been obvious at all.

The bot still hasn't paid me because there is still no way to settle the dispute, which turns out to be a flaw shared by both agentic finance and some marriages. The bots might want a wife, but what they need is a bank account.